Malwarebytes Survey Shows Negative Consumer Sentiment Toward ChatGPT

The overall message is that people are "deeply uncomfortable" about ChatGPT and generative AI.

A new Malwarebytes survey shows overall consumer sentiment toward ChatGPT is far from positive, let alone enthusiastic.

Malwarebytes conducted a pulse survey of its newsletter readers across the globe late last month via the Alchemer Survey platform. More than 1,400 people responded.

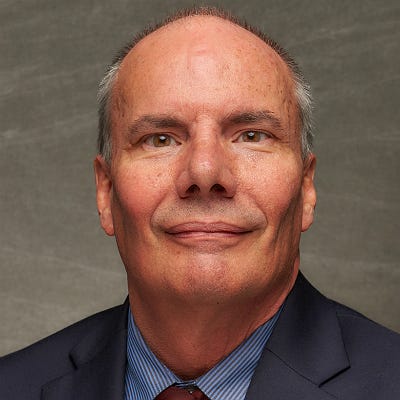

Malwarebytes’ Mark Stockley

“The uncertainty around how ChatGPT will change our lives, and whether it will take our jobs, is compounded by the mysterious way in which it works,” said Mark Stockley, cybersecurity evangelist at Malwarebytes. “It is an unknown quantity to everyone, even its creators. Machine learning (ML) models like ChatGPT are ‘black boxes’ with emergent properties that appear suddenly and unexpectedly as the amount of computing power used to create them increases.”

Consumer Sentiment Centers on Trust

The survey findings indicate consumer sentiment toward ChatGPT revolves around trust. Only 10% of those surveyed agreed with the following statement: “I trust the information produced by ChatGPT,” while 63% disagreed.

Respondents expressed a similar sentiment about accuracy, with only 12% agreeing with the statement: “The information produced by ChatGPT is accurate,” while more than one-half disagreed.

Beyond concerns around trust and accuracy, 81% of respondents believe ChatGPT could be a safety or security risk, and 52% called for a pause on ChatGPT work so regulations can catch up. That echoes concerns voiced by tech luminaries earlier this year.

Want to contact the author directly about this story? Have ideas for a follow-up article? Email Edward Gately or connect with him on LinkedIn.
